Research Topics

Media Adaptation

The basic concept behind dynamic media adaptation is the modification of high-quality media content according to a variety of contexts or constraints (devices, networks, user preferences, mobility, etc.) in order to provide a "guaranteed user experience" in a dynamic, real-time environment.

The concept of media adaptation has been present in research in several forms for many years. Transcoding (the conversion of media encodings, e.g. MPEG-1 to MPEG-2) and transmoding (the conversion of media types, e.g. graphics to video) are well-established technologies in both software and hardware. Methodologies using Quality of Service (QoS) have established a basis for media adaptation in networks. The editing of media for different formats and audiences (everything from cinema and in-flight movies to the pre-9 o'clock watershed on television) has until now been an arduous, manual process performed to cover a wide audience. All of these are forms of media adaptation. Dynamic Media Adaptation aims to change this by bringing these technologies and more into a real-time streaming environment, adapting media directly to each individual user's requirements.
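As a rough illustration of adapting media to a context, the sketch below picks the best available version of a media item for a given set of constraints. It is a minimal sketch, not the method used in any of the projects listed here: the `Rendition` and `Context` types, their fields, and the example `LADDER` values are all hypothetical, and a real system would also weigh user preferences and adapt continuously during streaming.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Rendition:
    """One available version of a media item (hypothetical structure)."""
    name: str
    bitrate_kbps: int
    width: int
    height: int


@dataclass(frozen=True)
class Context:
    """Constraints observed at playback time (hypothetical fields)."""
    bandwidth_kbps: int
    screen_width: int
    screen_height: int


def select_rendition(renditions: Sequence[Rendition],
                     ctx: Context) -> Optional[Rendition]:
    """Return the highest-bitrate rendition that fits the context, or None."""
    feasible = [
        r for r in renditions
        if r.bitrate_kbps <= ctx.bandwidth_kbps
        and r.width <= ctx.screen_width
        and r.height <= ctx.screen_height
    ]
    # max() with default=None handles the case where nothing fits.
    return max(feasible, key=lambda r: r.bitrate_kbps, default=None)


# Illustrative values only; not taken from any real service.
LADDER = [
    Rendition("low", 400, 426, 240),
    Rendition("medium", 1200, 854, 480),
    Rendition("high", 4000, 1920, 1080),
]

choice = select_rendition(LADDER, Context(1500, 1280, 720))
print(choice.name if choice else "no rendition fits")
```

Re-running the selection whenever the context changes (for example, when the measured bandwidth drops) gives a very simple form of dynamic adaptation.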

Projects Involving Media Adaptation

- Intermedia, European Union FP6 (PI: Prof. Magnenat-Thalmann), 2006 - 2010

Media Compression

Media compression is an important topic. The development of MPEG-1, MPEG-2, MPEG-4, and MPEG-4 Advanced Video Coding (H.264) has brought significant advances in compression, providing improved quality at the same data rate and/or lower data rates at the same quality. In more recent years, compression has expanded into areas such as 2D and 3D graphics, with technologies such as MPEG-4 BIFS (Binary Format for Scenes), BAP (Body Animation Parameters), and FAP (Face Animation Parameters).

Research Interests

My interests lie in video compression, with a specific focus on scalability; my current focus is the wavelet coding of images.
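To show why wavelets lend themselves to scalability, the sketch below applies one level of a Haar transform to a row of pixel values. This is a minimal illustration only: the Haar filter and the function names are assumptions chosen for simplicity, and the projects listed below are not stated to use this particular wavelet. The approximation band is a half-resolution version of the signal that can be decoded on its own, while the detail band is an optional refinement that restores full resolution.

```python
from typing import List, Sequence, Tuple


def haar_forward(signal: Sequence[float]) -> Tuple[List[float], List[float]]:
    """One level of the averaging Haar transform on an even-length signal."""
    if len(signal) % 2:
        raise ValueError("signal length must be even")
    # Pairwise averages form the coarse (base) layer.
    approx = [(signal[i] + signal[i + 1]) / 2 for i in range(0, len(signal), 2)]
    # Pairwise half-differences form the refinement (enhancement) layer.
    detail = [(signal[i] - signal[i + 1]) / 2 for i in range(0, len(signal), 2)]
    return approx, detail


def haar_inverse(approx: Sequence[float], detail: Sequence[float]) -> List[float]:
    """Rebuild the full-resolution signal from both layers."""
    out: List[float] = []
    for a, d in zip(approx, detail):
        out.extend((a + d, a - d))
    return out


row = [10.0, 12.0, 8.0, 6.0]
base, enhancement = haar_forward(row)
print(base)                              # coarse layer: half the samples
print(haar_inverse(base, enhancement))   # full reconstruction
```

A receiver with limited bandwidth or display size could stop after the base layer; applying the transform again to the base layer yields further, coarser layers.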

Projects

- Scalable Wavelet Video, w/ Nortel Networks (PI: Prof. Dansereau), 2008

- Scalable Wavelet Video, w/ Nortel Networks (PI: Prof. Dansereau), 2007

Media Mobility

Media Mobility deals with the issues that arise when the device on which media is being presented is mobile, is not attached to a specific network, and has limited resources (in terms of memory, CPU processing capability, storage, and display). These restrictions and unknowns present new challenges in handling data. The other key issue in this area is User Profiles - when roaming (from device to device, network to network) we need

Research Interests

My research interests in this area are currently focused on the development of session mobility tools, enabling the transfer of complete sessions whilst a dynamic adaptation process is applied. I am also interested in the collection, storage, and presentation of user profile information at various hierarchical levels.

Projects

- MESSAGES, Ontario Research Fund (PI: Prof. Georganas), 2009 - 2013

- Intermedia, European Union FP6 (PI: Prof. Magnenat-Thalmann), 2006 - 2010

Medical Imaging

The use of 2D and 3D analysis for medical procedures is becoming more and more popular. Processing X-Ray, CT (Computed Tomography), and MRI (Magnetic Resonance Imaging) data into 3D models is just the beginning. New techniques are enabling full anatomical representations, pre/post-operative analysis, intra-operative information systems, and so on. Additional information, simpler procedures, and improved analysis can improve patient health, diagnose problems faster, and shift patients from being in-patients (with the associated health issues and costs) to out-patients - reducing the financial burden on health systems, improving patient recovery, and allowing patients to feel more relaxed in their home environment.

In this area of research, I am focused on two topics:

- Femoral-Acetabular Impingement - dealing with a common orthopedic problem by looking at methods to simulate the interaction between the acetabular socket and the femoral head

- Virtual Surgery Simulation - providing a simulation environment that permits interns in endoscopic surgery to train in an environment as close as possible to the real thing

Femoral-Acetabular Impingement

Femoro-Acetabular Impingement (FAI) is a common cause of hip pain due to abnormal joint shape [1,2].

Morphologic abnormalities in the femoral head-neck junction or the acetabulum lead to abnormal contact forces during extremes of hip motion [1,2].

Our work is motivated by the medical need for impingement detection in FAI that reports distance parameters. In preoperative correction simulation, our main goals are to detect the impinged regions of the joint and to estimate the level of impingement, in order to determine the location and amount of correction required.

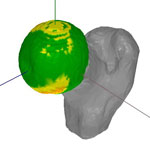

Detecting the position and level of joint impingement is often key to computer-aided surgical planning that aims to normalize joint kinematics. Most current impingement detection methods for ball-and-socket joints are either inefficient or report only a few collided points as the detection result. Our research has led to the development of a novel real-time impingement detection system with rapid, memory-efficient uniform sampling and a surface-to-surface distance measurement feature to estimate the overall impingement.

Our system describes near-spherical objects in a spherical coordinate system, which reduces the space complexity and the computation cost. The sampling design further reduces the memory cost by generating uniform sampling orientations. Rapid and accurate impingement detection with surface-to-surface distance measurement provides more realistic and detailed information for estimating the overall impingement on the ball-and-socket joint, which is particularly useful for computer-aided surgical planning.
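The general idea can be sketched as follows. This is a simplified illustration under stated assumptions, not the system described above: each surface is modelled as a radius function of direction about a shared joint centre, the sampling orientations come from a Fibonacci lattice (one common way to get near-uniform directions; the actual sampling design is not specified here), and the gap along each direction stands in for the surface-to-surface distance. All function names and the example radii are hypothetical.

```python
import math
from typing import Callable, List, Sequence, Tuple

Direction = Tuple[float, float, float]
RadialSurface = Callable[[Direction], float]


def fibonacci_directions(n: int) -> List[Direction]:
    """Approximately uniform unit vectors on the sphere (Fibonacci lattice)."""
    golden_angle = math.pi * (3.0 - math.sqrt(5.0))
    dirs: List[Direction] = []
    for i in range(n):
        z = 1.0 - (2.0 * i + 1.0) / n          # evenly spaced heights in (-1, 1)
        ring = math.sqrt(max(0.0, 1.0 - z * z))  # radius of the circle at height z
        phi = i * golden_angle
        dirs.append((ring * math.cos(phi), ring * math.sin(phi), z))
    return dirs


def impingement_map(head: RadialSurface, socket: RadialSurface,
                    directions: Sequence[Direction]) -> List[Tuple[Direction, float]]:
    """Return (direction, depth) wherever the head extends beyond the socket."""
    hits = []
    for d in directions:
        gap = socket(d) - head(d)   # radial clearance along this direction
        if gap < 0.0:
            hits.append((d, -gap))  # penetration depth
    return hits


# Hypothetical example: a spherical socket and a head with a bump near +z.
def socket_radius(d: Direction) -> float:
    return 25.0


def head_radius(d: Direction) -> float:
    return 24.0 + 2.0 * max(0.0, d[2]) ** 8


hits = impingement_map(head_radius, socket_radius, fibonacci_directions(1000))
print(len(hits), "impinged directions; max depth",
      max(depth for _, depth in hits))
```

Because both surfaces are indexed by the same list of directions, each one needs only a single radius per sample, which is the kind of memory saving a spherical-coordinate representation makes possible. The result is a map of depths over the whole contact region rather than a handful of collided points.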

Virtual Surgery Simulation

[1] Ganz R, Parvizi J, Beck M, Leunig M, Notzli H, Siebenrock KA (2003) Femoroacetabular Impingement: A cause for osteoarthritis of the hip. Clinical Orthopaedics and Related Research, No. 417, pp. 112-120

[2] Wisniewski SJ, Grogg B (2006) Femoroacetabular Impingement: An overlooked cause of hip pain. American Journal of Physical Medicine and Rehabilitation, Vol. 85, pp. 546-549

Virtual Reality

Virtual Reality has been an established research field since the 1960s, when Ivan Sutherland invented the Head-Mounted Display (among other things); since then, advances in the field have come in fits and starts. The ultimate goal for Virtual Reality is that we can use it and feel as though we are in whatever environment we desire. This includes visual replication (realistic shading, shadows, reflections), auditory replication (3D spatial sound), interaction (multiple people - real and unreal), haptic feedback (feeling the weight, resistance, and forces in a virtual world), physical responses (things moving and reacting according to physical laws), and behavioral responses (things moving and reacting according to behavioral patterns).

Research Interests

My research interests in this area include the following topics:

- Real-time Rendering - real-time rendering using ray-tracing and other types of global illumination

- Ultrasonic Haptic Devices - haptic feedback using channeled ultrasonic sound

- 3D Spatial Audio - rendering of realistic audio based on the position of 3D emitters and the environment; including multi-person HRTF

- Procedural Audio - rendering of realistic audio based on material properties, rather than prerecordings

Architecture

Architectural structures are becoming more and more complex, and their designs more elaborate. By using 3D modelling and animation tools we are able to model and test much more complex designs, even to the point of developing dynamic structures.

Projects

- Dynamic Architectural Structures Project (PI: Prof. Baez), 2008 - 2009

Motion Capture

Motion capture involves the capturing of limb or surface information in order to apply or represent this in a 3D environment, world, or scene.

Research Interests

- Full Body Motion Capture - for medical analysis, including gait

- Facial Animation - for realistic animation of the face; especially in real-time

Automotive

Many studies concur that, on average, 80% of all vehicle-related accidents occur when a driver is distracted; other studies conclude that the top five causes of this distraction are, in descending order: (1) using/dialling a mobile phone, (2) adjusting radio/cassette or compact disc players, (3) adjusting vehicle/climate controls, (4) eating/drinking, and (5) passenger/child distraction.

Driving safety can be linked to the fact that driving is a multi-tasking challenge. The driving task itself is straightforward and does not introduce increased workload to most drivers. However, once the driver is engaged with additional tasks even as simple as adjusting and interacting with the sound system, air-conditioner, mirrors, lights, or any other function and control, these become additional concurrent tasks. Empirical research has demonstrated that while one is engaged in a primary task (e.g., driving), doing additional tasks can constitute an interruption that becomes disruptive. The main reason researchers suggest for the adverse impact of interruptions is that user attention is a scarce resource, and users are susceptible to interruption overload. The nature and timing of interruptions are important in determining whether the interruption will be disruptive or actually task enhancing. This is tightly linked to the claim that one of the largest causes of automobile accidents is driver distraction, and the interruption of the primary driving task constitutes such distraction.

Research Interests

My research interests in this area are focused on the development of user interfaces for automobiles that drastically reduce driver distraction.